
AI Governance Basics: Why It Matters Now

Artificial intelligence is becoming part of almost everything we use. It helps write emails, review resumes, detect fraud, and even support doctors. Many organizations are adopting AI because it saves time and improves decision-making. But the risks are not always obvious at first.

A company once used an AI tool to screen job applications and speed up hiring. After some time, they noticed that certain groups of candidates were being rejected more often than others. No one had set that rule. The system had simply learned patterns from past hiring data and continued them. This is how AI problems usually appear: not as a sudden failure, but as something that quietly goes wrong over time.

This is where AI governance comes in. It is not just about rules or compliance. It is about making sure AI behaves the way we expect it to. From a cybersecurity point of view, if a system is not properly controlled, it becomes a risk, no matter how useful it is.

What AI governance really means

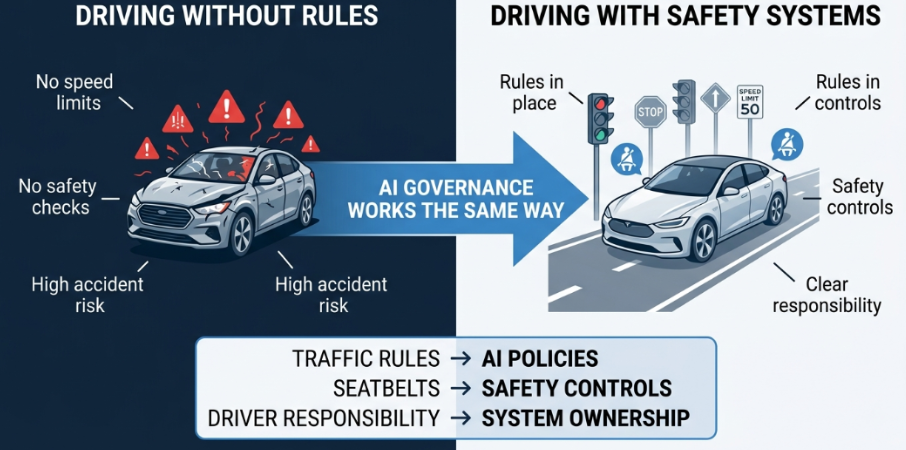

A simple way to understand AI governance is to think about driving a car. Cars are powerful and useful, but they only work safely because there are traffic rules, signals, and safety systems in place. Without those, even good drivers would struggle, and accidents would be common.

AI works in a similar way. It is powerful, but without structure, it can create confusion. AI governance is that structure. It defines how systems are built, how decisions are checked, and who is responsible when something goes wrong. It makes sure there is control instead of guesswork.

For example, consider a bank using AI to detect fraud. If the system starts blocking genuine transactions and no one understands why it is happening or who is responsible for fixing it, it creates more problems than it solves. This is similar to a security system generating alerts without proper visibility or ownership. Governance ensures there is clarity, accountability, and a way to fix issues quickly.

Why this matters more today

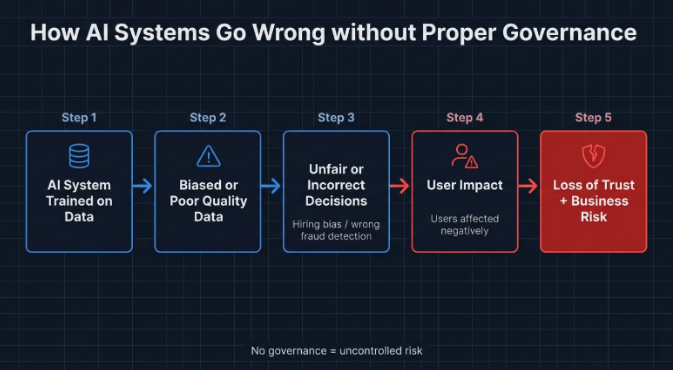

AI systems learn from data, unlike traditional software, which follows fixed instructions. This makes them flexible, but also harder to predict. Small issues in data can turn into larger problems once the system is in use.

Take hiring systems again. If most of the training data comes from one type of candidate, the AI may start favoring similar profiles. It does not stop or raise an alert. It simply continues making decisions that look normal on the surface but are actually biased. Over time, this becomes a serious issue.
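
One way to catch the kind of quiet drift described in the hiring example is to compare how often each group of candidates is accepted. The following is a minimal sketch, not a complete fairness audit. The group labels, the sample data, and the function names are made up for illustration, and the 0.8 threshold echoes the "four-fifths" rule of thumb sometimes used in employment-selection reviews; treat it as a prompt for human review rather than a legal test.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of accepted candidates per group.

    `decisions` is an iterable of (group, accepted) pairs,
    where `accepted` is True or False.
    """
    totals = defaultdict(int)
    accepted = defaultdict(int)
    for group, was_accepted in decisions:
        totals[group] += 1
        if was_accepted:
            accepted[group] += 1
    return {group: accepted[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Ratio of the lowest selection rate to the highest (1.0 means equal)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was accepted in any group, so there is no gap
    return min(rates.values()) / highest


# Made-up sample data, for illustration only.
sample = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)

rates = selection_rates(sample)
ratio = disparity_ratio(rates)
needs_review = ratio < 0.8  # illustrative threshold, an assumption
```

A check like this does not explain why the gap exists. It only makes the pattern visible early enough for someone to investigate it.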

Another issue that is becoming common is what many teams now call “shadow AI.” Employees start using external AI tools on their own to speed up work, such as summarizing documents or generating content. In some cases, sensitive company data gets entered into these tools without proper approval. Once that data leaves the organization, it becomes difficult to track or control. This creates a risk that is very similar to data leakage in traditional systems.
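
As a rough illustration of a guardrail against this kind of leakage, a team could check text for obviously sensitive content before it is pasted into or sent to an external tool. The sketch below is an assumption-heavy example: the pattern list and the function names are hypothetical, the regular expressions are deliberately simple, and a real control would need a reviewed pattern set and would sit alongside policy and training rather than replace them.

```python
import re

# Hypothetical patterns for illustration; a real list would need review.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "card-like number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "confidential marker": re.compile(r"\bconfidential\b", re.IGNORECASE),
}


def find_sensitive(text):
    """Return the sorted names of all patterns that match the text."""
    return sorted(
        name
        for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(text)
    )


def safe_to_share(text):
    """True when none of the patterns match; this is not a guarantee of safety."""
    return not find_sensitive(text)


draft = "Summarize: contact jane@example.com, card 4111 1111 1111 1111"
findings = find_sensitive(draft)
```

Simple pattern matching will miss a lot, so a clean result here only means that nothing obvious was found.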

AI mistakes are public mistakes

One important difference with AI is how visible failures can be. When traditional systems fail, the issue often stays internal. With AI, problems tend to become public very quickly.

There have been cases where chatbots released to the public started generating harmful or inappropriate responses. In other situations, AI systems made incorrect decisions that directly affected users. These issues spread quickly online and damage trust.

In many ways, this is similar to a security incident becoming public. The technical issue matters, but the loss of trust often has a bigger impact. This is why governance needs to be in place before systems are widely used.

Governance is not only for big companies

It is often assumed that only large organizations need AI governance, but that is not true. Even smaller teams are using AI in ways that affect real users. For example, a small startup using AI to recommend products may unintentionally show biased results or expose user data. The scale may be smaller, but the impact still matters. Users will still lose trust if the system behaves incorrectly.

Starting early actually makes governance easier. Simple steps like understanding what data is used, who owns the system, and how decisions are made can prevent larger problems later.
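
For a small team, those first steps can be as light as keeping one record per AI system and checking that the basics are filled in. The sketch below is one possible shape for such a record, not a standard format. The class name, field names, and example values are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRecord:
    """A minimal, hypothetical inventory entry for one AI system."""
    name: str
    owner: str = ""                                    # who is accountable
    data_sources: list = field(default_factory=list)   # what data is used
    decision_summary: str = ""                         # how decisions are made


def missing_basics(record):
    """List the basic governance fields that are still empty."""
    gaps = []
    if not record.owner.strip():
        gaps.append("owner")
    if not record.data_sources:
        gaps.append("data_sources")
    if not record.decision_summary.strip():
        gaps.append("decision_summary")
    return gaps


# Example entry with made-up values.
recommender = AISystemRecord(
    name="product-recommender",
    data_sources=["purchase history"],
)
gaps = missing_basics(recommender)
```

In this example the record names its data but has no owner and no description of how decisions are made, which are exactly the gaps that become painful later.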

The goal is trust

Ultimately, AI governance is about trust. Without trust, even the best systems will not be used. Think again about the car example. People trust the road system because there are clear rules, safety measures, and accountability. Without those, no one would feel safe on the road. AI works in the same way. Trust comes from knowing that the system is controlled and reliable.

If users trust the system, they will use it. If organizations trust their systems, they can depend on them more. That trust is built through consistent governance, not assumptions.

Conclusion

AI is powerful, but it does not manage itself. Without proper control, small issues can grow into larger risks over time. Governance helps keep systems reliable while still allowing innovation to grow.

As AI becomes more common in everyday systems, having this structure in place is no longer optional. It is something every organization will need to focus on. In the next post, we will look at the main AI governance frameworks and how they are used in practice.
